Migrating Big Data to the Cloud

By Paul Scott-Murphy

Oct 15, 2020


Moving small amounts of data is simple, but moving large amounts is extremely complex. This nonlinear challenge has held back cloud adoption for big data environments that have grown on-premises. This is the well-known “data gravity” challenge, where it makes more sense to add datasets to where they already reside, because it is easier, and because they have more value in proximity to other data.

But the cloud has many advantages over private, on-premises infrastructure: scale, flexibility, and innovation. Bringing big data to the cloud makes sense, but is difficult.

Let’s look at a real-world example of migrating a big data environment to AWS, and analyze what it takes to migrate a 2.5 petabyte, 800-node Hadoop cluster that is in 24x7 use at a major US organization. We’ll compare traditional approaches to data migration with a LiveData strategy that takes data changes into account while migration is in progress, and demonstrate that traditional approaches simply cannot cope with actively changing datasets at scale.

How Big is Big Data?

What does a large, real-world, big data environment look like? Every data storage and processing cluster is different, but an example that can help to explain the unique challenges at scale comes from one WANdisco customer. This organization runs an Apache Hadoop 2.8 cluster in their own datacenter with 800 nodes to service large-scale production data storage, processing, and analytic needs. It holds about 2.5 petabytes of data, growing with more than 4TB of change every day.

Like any production environment, it requires constant operational use to recoup the investments made in the physical infrastructure, operational expense, and associated opportunity costs. Hadoop clusters run hot, and this one is no exception.

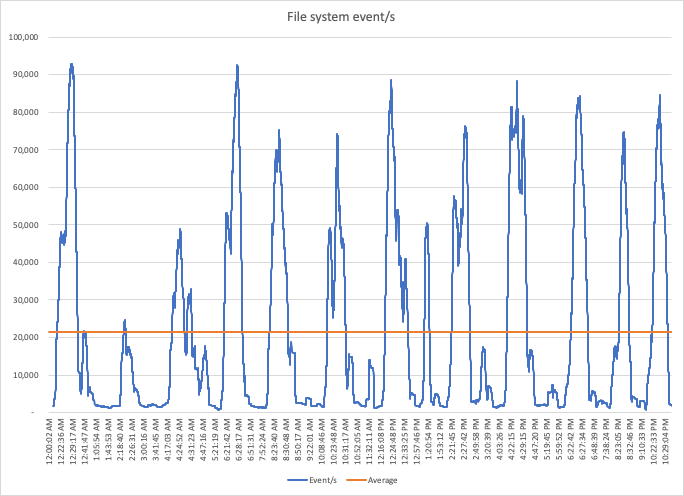

One measure of this activity is the rate of change in the file system. The graph below shows a moving average of file system change operations per second in this cluster over 12 hours. It performed an average of 21,388 file system change operations every second, and had activity peaks around five times that rate.

Big Data Migration

Although this cluster has been operating successfully for years, the organization wants to migrate it to AWS. They want to take advantage of the scale, depth of services, demand-driven operational cost effectiveness, proximity to other data and services, resilience, the community of users and of vendor solutions, security, compliance options, the pace of innovation, and other benefits that come with Amazon Web Services.

But migrating petabytes of actively changing, “live” data can be difficult, particularly when the business depends on the continued operation of applications in the cluster, and access to its data. Any disruption to business operations may prevent a migration from even being attempted, and in this case, continued operation is an absolute requirement.

Requirements

Their initial goal for migration was to bring a selected subset—totaling 500TB over 8.6 million files—of their 2.5 PB to AWS S3, allowing them to run production workloads at scale in the cloud to validate operation and performance before migrating the majority of their cluster data.

This is complicated by the additional requirement to maintain operations at all times during migration, and to constrain the bandwidth used to a subset of their available 10 Gb/s capacity: no more than 3 Gb/s. There is no room for downtime or service disruption for the cluster or other network users.

Cluster Activity

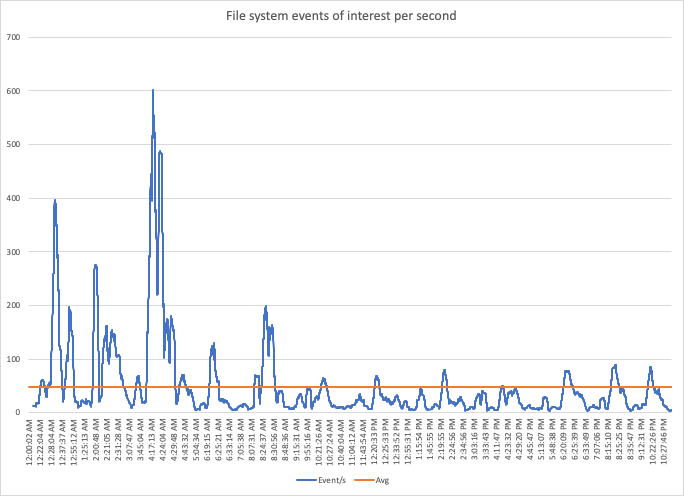

Cluster activity must be considered for a migration that needs to work without downtime. While the total cluster activity is large and measurable, migration planning needs to be concerned with changes made to the datasets that are part of the migration. The graph below shows that activity over time for the 500 TB subset of data.

Interestingly, there is a lot of variance in this activity. While the long-term average is about 50 events per second, peaks are ten times larger than that, and also vary over time.

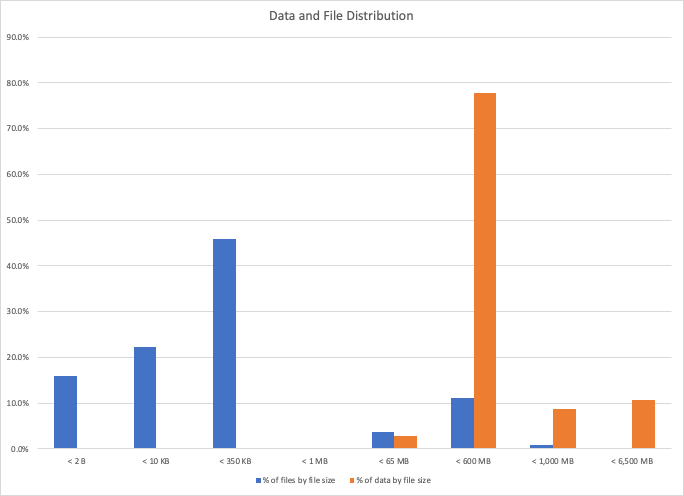

If we assume that the average of 50 events per second is valid, we can then look at the types of files over which that activity occurs. For a sample of 10,000 files, the graph below shows the distribution of file sizes, bucketed into eight groups, and therefore the percentage of data volume that each of those groups contributes to the total amount of data to be migrated.

We can see that while the majority of files are small (84% are less than 350 KB), the majority of data reside in files between 65MB and 600MB in size. Hadoop clusters operate most efficiently when files are at least the size of a single HDFS block, by default 128MB. But in the real world, clusters are not always used efficiently, and this graph is a clear indication that you can expect to see many small files even when most of your data are in larger ones.

What Happens During Data Migration?

Now that we know what the cluster, data, and activity look like, we can understand what needs to happen during data migration:

- Existing datasets need to be copied from HDFS to S3, with each file in HDFS transferred to an object in S3 with an identifier that distinguishes the full path to the source file. This is the same approach used by S3A to represent S3 storage as a Hadoop-compatible file system.

Because we need to migrate a lot of data over a fixed bandwidth (3 Gb/s), this will take time. During that time, the data will continue to change, being added to, modified, moved, and deleted, so:

- Changes made in HDFS to those datasets during migration need to be applied to the replica of the data in S3, so that we can continue to operate applications that depend on them throughout. The business cannot stop just to migrate.
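As a small illustration of the file-to-object mapping described above, the sketch below derives an S3 object key from an HDFS path by dropping the scheme, authority, and leading slash. The function name `hdfs_path_to_s3_key` and the optional `key_prefix` parameter are hypothetical, and this is only an assumption about how such a mapping could look, not the behavior of any particular migration product.

```python
from urllib.parse import urlparse


def hdfs_path_to_s3_key(hdfs_uri: str, key_prefix: str = "") -> str:
    """Map an HDFS URI or absolute path to an S3 object key (illustrative only)."""
    # Keep only the path component, e.g. "/data/sales/part-0000".
    path = urlparse(hdfs_uri).path
    # Drop the leading slash so the key mirrors the source path.
    key = path.lstrip("/")
    if key_prefix:
        # Optionally nest everything under a prefix within the bucket.
        key = key_prefix.strip("/") + "/" + key
    return key


print(hdfs_path_to_s3_key("hdfs://namenode:8020/data/sales/part-0000"))
print(hdfs_path_to_s3_key("/data/sales/part-0000", key_prefix="landing/"))
```

Keeping the full source path in the key means a path seen in HDFS can be located in the bucket without a separate lookup table.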

Sending 500 TB of data at 3 Gb/s takes 15.4 days. Assuming that changes made to the data throughout don’t materially increase or decrease that size from 500 TB, and with knowledge of the distribution of file sizes and the number of files, over 15.4 days, there will be:

- 66.5 million file changing events, and

- Changes to about 13.3 TB of data (using the median file size of 200 KB).

With a fixed bandwidth, we can calculate the total amount of time required to migrate the data and ongoing changes in the same way as calculating compound interest. The total data that will be transferred is 513.5 TB, and it will actually take 15.8 days.
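The compound-interest style calculation can be sketched as a geometric series: each batch of changes takes time to send, during which more changes accumulate. The sketch below assumes decimal terabytes, a constant 50 events per second, and 200 KB changed per event. The function names are illustrative, and the closed form is one way to model the figures above, so it lands close to them rather than matching every rounded digit.

```python
SECONDS_PER_DAY = 86_400


def transfer_days(data_tb: float, bandwidth_gbps: float) -> float:
    """Days needed to send data_tb terabytes (10**12 bytes) at bandwidth_gbps."""
    bits = data_tb * 1e12 * 8
    return bits / (bandwidth_gbps * 1e9) / SECONDS_PER_DAY


def total_with_changes(data_tb, bandwidth_gbps, events_per_sec, bytes_per_event):
    """Return (total_tb, days) when changes made during transfer are also sent."""
    change_tb_per_day = events_per_sec * bytes_per_event * SECONDS_PER_DAY / 1e12
    capacity_tb_per_day = bandwidth_gbps * 1e9 / 8 * SECONDS_PER_DAY / 1e12
    ratio = change_tb_per_day / capacity_tb_per_day
    if ratio >= 1:
        raise ValueError("change rate exceeds bandwidth; migration never converges")
    # Sum of the geometric series data * (1 + ratio + ratio**2 + ...).
    total_tb = data_tb / (1 - ratio)
    return total_tb, total_tb / capacity_tb_per_day


print(round(transfer_days(500, 3), 1))  # initial copy only
total_tb, days = total_with_changes(500, 3, 50, 200_000)
print(round(total_tb, 1), round(days, 1))
```

The `ratio` term also shows when a migration cannot converge: if data changes faster than the link can carry it, the series never ends.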

Migration Limits

With 8.6 million files to migrate and 500 TB of data, migration can be limited by bandwidth, latency, or the rate at which files can be created in the S3 target.

The calculations above assume that there is no cost or overhead in determining the changes that occur to the data that is being migrated. This requires a “Live” data migration technology that combines knowledge of the data to be migrated with the changes that are occurring to it without needing to repeatedly scan the migration source to determine what was changed.

API Rates

AWS states that you can send 3,500 PUT/COPY/POST/DELETE requests per second per prefix in an S3 bucket (Best Practices Design Patterns: Optimizing Amazon S3 Performance - Amazon Simple Storage Service). A prefix is a logical grouping of the objects in a bucket. The prefix value is similar to a directory name that enables you to store similar data under the same directory in a bucket. This migration has 15 prefixes, so at best, the API rate will be 52,500 PUT requests per second. The migration will need to perform one PUT request per file, and one per file changing event, for a total of 75.1 million requests. At the maximum rate, this would take only a small portion of the 15.8 days, so S3 API rate limits will not be a limiting factor.
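A quick check of the API-rate arithmetic, using only the figures quoted above (8.6 million files, 66.5 million change events, 15 prefixes, 3,500 write requests per second per prefix). The function name is illustrative.

```python
def api_limited_seconds(files, change_events, prefixes, puts_per_prefix_per_sec=3_500):
    """Return (total_requests, max_rate, seconds) if S3 request rate were the only limit."""
    total_requests = files + change_events
    max_rate = prefixes * puts_per_prefix_per_sec
    return total_requests, max_rate, total_requests / max_rate


total, rate, seconds = api_limited_seconds(8_600_000, 66_500_000, 15)
print(f"{total:,} requests at {rate:,}/s take about {seconds / 60:.0f} minutes")
```

At well under an hour, the request-rate ceiling is negligible next to the days needed for the data transfer itself.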

Latency

Rate Limits

The latency between the cluster and S3, and the extent to which those requests can be executed concurrently may limit operation. A migration solution will need to ensure that sufficient request concurrency can be achieved.

The organization’s cluster is 1,800 km from their chosen AWS S3 region, which imposes a round-trip latency of 23 ms.

With no concurrency, and a 23 ms round-trip latency, 75.1 million requests will take 20 days to perform. This is more than the time required to send 500 TB, so request latency becomes the limiting factor unless requests are issued concurrently. Any solution needs to execute requests concurrently to achieve 3 Gb/s in this environment.
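The serial-request estimate, and a lower bound on the concurrency needed to keep request latency from exceeding the transfer window, can be sketched as follows. It assumes each request costs exactly one round trip and ignores payload transfer time, so the real concurrency required would be higher. The function names are illustrative.

```python
import math

SECONDS_PER_DAY = 86_400


def serial_request_days(requests: int, rtt_s: float) -> float:
    """Days spent waiting on round trips if requests are issued one at a time."""
    return requests * rtt_s / SECONDS_PER_DAY


def min_concurrency(requests: int, rtt_s: float, deadline_days: float) -> int:
    """Smallest number of parallel request streams that fits the deadline."""
    return math.ceil(serial_request_days(requests, rtt_s) / deadline_days)


print(round(serial_request_days(75_100_000, 0.023), 1))
print(min_concurrency(75_100_000, 0.023, 15.8))
```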

Throughput Limits

The second impact of latency is the TCP throughput limit that it imposes. This is the standard window-size / round-trip-time tradeoff, where for a fixed window size, increasing latency limits the achievable throughput for a single TCP connection.

Once again, assuming a 23 ms round-trip latency, for a standard packet loss estimate of 0.0001%, an MTU of 1500 bytes, and a TCP window size of 16,777,216 bytes, the expected maximum TCP throughput for a single connection is 0.5 Gb/s.

Again, concurrent TCP connections will be needed to achieve throughput at the 3Gb/s rate.
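The article does not say which formula produced the 0.5 Gb/s figure. One common approximation that reproduces it is the simplified Mathis estimate, MSS / (RTT × √loss), capped by the window limit, window / RTT. The sketch below uses that approximation as an assumption, with the constant factor taken as 1 and an MSS of 1,460 bytes (a 1,500-byte MTU minus 40 bytes of IP and TCP headers).

```python
import math


def mathis_throughput_bps(mss_bytes: int, rtt_s: float, loss_rate: float) -> float:
    """Loss-limited throughput estimate in bits per second."""
    return mss_bytes * 8 / (rtt_s * math.sqrt(loss_rate))


def window_throughput_bps(window_bytes: int, rtt_s: float) -> float:
    """Window-limited throughput: at most one window per round trip."""
    return window_bytes * 8 / rtt_s


def single_connection_bps(mss_bytes, window_bytes, rtt_s, loss_rate):
    """A single connection is bounded by the tighter of the two limits."""
    return min(
        mathis_throughput_bps(mss_bytes, rtt_s, loss_rate),
        window_throughput_bps(window_bytes, rtt_s),
    )


rtt = 0.023                # 23 ms round trip
loss = 0.0001 / 100        # 0.0001 % expressed as a fraction
mss = 1500 - 40            # assumed MSS for a 1500-byte MTU
per_conn = single_connection_bps(mss, 16_777_216, rtt, loss)
connections = math.ceil(3e9 / per_conn)
print(f"{per_conn / 1e9:.2f} Gb/s per connection, {connections} connections for 3 Gb/s")
```

Under these assumptions the loss-limited estimate, not the window size, is the binding constraint.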

S3 API Overhead

Because the S3 API requires at least a PUT operation per file created, there is overhead in the HTTP request made to create an object that totals about 1800 bytes per request, although this can differ based on the object identifier used. When the majority of files are significantly larger than 1800 bytes, this does not impact throughput, but when many files approximate that size or below, it can have a large impact on the content throughput achievable.

For our file distribution, the median file size is 200 KB, of which 1800 bytes represents a 1% overhead, which is essentially insignificant compared to bandwidth requirements. However, there are limits to the number of concurrent requests that can be dedicated to servicing small files.
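To see where the roughly 1,800 bytes of request overhead starts to matter, the sketch below computes the share of bytes on the wire that are file content for a few file sizes. The sizes other than the 200 KB median are arbitrary examples chosen for illustration.

```python
def payload_efficiency(file_bytes: int, overhead_bytes: int = 1_800) -> float:
    """Fraction of bytes sent that are file content rather than request overhead."""
    return file_bytes / (file_bytes + overhead_bytes)


for size in (1_000, 10_000, 200_000, 128 * 1024 * 1024):
    print(f"{size:>12,d} bytes -> {payload_efficiency(size):.1%} content")
```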

Migration Approaches

Traditional Migration

With sufficient use of concurrent requests and TCP connections, there is no impediment to achieving the target 3Gb/s. But we also need to consider how a migration solution accommodates the changes that occur to data while migration is underway.

A migration approach that does not take changes into account during operation will need to attempt to determine what differs between the source cluster and the target S3 bucket by comparing each file/object and transferring any differences after an initial transfer has occurred.

Migration of a subset of data

Migrating 500TB of unchanging data at 3 Gb/s will take 15.4 days. But datasets continue to change during that time. If there is no information available about exactly what has changed, the source and target need to be compared.

That comparison needs to touch every file (8.6 million) and if performed in sequence will take 55 hours. But during that time, data will continue to change, and without a mechanism to capture those specific changes, we will need to return again to the data and compare it in total again.

The average rate of change for the selected 500 TB of the 2.5 PB of total data was just 50 events per second. During 55 hours, we can expect about 9.9 million changes to files (55 hours × 3,600 seconds × 50 events per second), and about 2 TB of data to have been modified.
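A rough model of one compare pass shows why rescanning never catches up. It assumes each file comparison costs one 23 ms round trip and is done sequentially, which is an assumption that happens to reproduce the 55-hour figure, and that each change event touches a 200 KB file. The function name is illustrative.

```python
def compare_pass(files: int, rtt_s: float, events_per_sec: float, bytes_per_event: int):
    """Return (hours, changes, changed_tb) accumulated during one sequential compare pass."""
    duration_s = files * rtt_s
    changes = events_per_sec * duration_s
    changed_tb = changes * bytes_per_event / 1e12
    return duration_s / 3600, changes, changed_tb


hours, changes, changed_tb = compare_pass(8_600_000, 0.023, 50, 200_000)
print(f"{hours:.0f} hours, {changes / 1e6:.1f} million changes, {changed_tb:.1f} TB modified")
```

Because the pass length depends on the number of files rather than on how much changed, every later pass costs the same time and leaves a similar backlog behind it.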

Migration of all data

The change rate grows far faster than the data volume to be migrated: what was 50 events per second for 500 TB of data became 21,388 events per second for 2.5 PB of data, roughly a 428-fold increase for five times the data.

The comparison of all data will need to touch 5 times as many files, now 43 million, and if performed in sequence will take about 10 days, even before transferring any changed data. At the change rates for our example, this would be another 10TB of data, which itself will take more than 7 hours.

Accommodating that change rate requires sophisticated techniques for obtaining, consuming and processing events of interest if they are needed to drive a LiveData migration solution.

A LiveData Strategy

If the migration technology used does not need to compare data that have been modified during an extended period of data transfer, it can eliminate the gap that exists between source and target, and maintain the target as a current replica of the source data. It requires the combination of change event information with the activities from scanning existing data, and an intelligent approach to bringing those two streams of information together to take the correct actions against the migration target. This is not just change data capture, but the use of a continuous stream of change notifications with the scan of the data that are undergoing change.
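The idea of combining a single scan with a stream of change notifications can be illustrated with a toy, in-memory model. This is not WANdisco's implementation. The dictionaries standing in for HDFS and S3, the `change_batches` input, and the "reconcile against the current source state" rule are all assumptions chosen to keep the sketch small.

```python
from collections import deque


def reconcile(path, source, target):
    """Make the target agree with the source's current state for one path."""
    if path in source:
        target[path] = source[path]
    else:
        target.pop(path, None)


def live_migrate(source, change_batches):
    """Interleave one scan of `source` with changes that arrive while it runs.

    `change_batches` is a list of batches; each batch is a list of
    (path, content) pairs, where content None means the path was deleted.
    """
    target = {}
    to_scan = deque(sorted(source))      # the single listing of existing data
    batches = deque(change_batches)      # stands in for a live notification stream
    while to_scan or batches:
        if to_scan:
            reconcile(to_scan.popleft(), source, target)
        if batches:
            for path, content in batches.popleft():
                # The application changes the source...
                if content is None:
                    source.pop(path, None)
                else:
                    source[path] = content
                # ...and the notification triggers work on that path only.
                reconcile(path, source, target)
    return target


source = {"/a": "1", "/b": "2", "/c": "3"}
batches = [[("/c", None), ("/d", "4")], [("/a", "1b")], [], [("/e", "5"), ("/e", None)]]
target = live_migrate(source, batches)
print(target == source)
```

In this model, work is driven by the scan and by individual change notifications, so the source is never listed or compared a second time.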

The only constraint is that the rate of change to the source data must be less than the available bandwidth.

For this organization, that rate of change for their full cluster is about 4.3 TB of data per day, or 0.4 Gb/s. This is well within their available bandwidth, so LiveData migration is a feasible approach, allowing them to move from an RPO of 10 days to essentially zero.
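The conversion from a daily change volume to the sustained bandwidth it needs is a one-line calculation, shown here with decimal terabytes and the 3 Gb/s cap from the requirements. The function name is illustrative.

```python
def tb_per_day_to_gbps(tb_per_day: float) -> float:
    """Sustained rate in Gb/s needed to keep up with tb_per_day of change."""
    return tb_per_day * 1e12 * 8 / 86_400 / 1e9


required = tb_per_day_to_gbps(4.3)
print(f"{required:.1f} Gb/s needed, {required / 3:.0%} of a 3 Gb/s allowance")
```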

They used WANdisco’s LiveData Migrator to migrate data from their actively used cluster to AWS S3. It combines a single scan of the source datasets with processing of the ongoing changes that occur to achieve a complete and continuous data migration. It does not impose any cluster downtime or disruption, and requires no changes to cluster operation or application behavior.

LiveData Migrator let them perform their migration without disrupting business operation, and ensured that datasets were transferred completely, even while under active change in a very large and busy Hadoop environment.

Summary

We’ve explained how a large US organization was able to achieve a migration for an actively-used, 800-node, 2.5 PB Apache Hadoop cluster to AWS S3, and do it without any downtime or disruption to applications or the business using a LiveData strategy and WANdisco’s LiveData Migrator product.

Most importantly, advances in migration technology mean that the capabilities now exist to migrate actively-used Hadoop environments at scale to the cloud. You can take advantage of the accelerating advances in AI, machine learning, and constantly reducing storage costs in the public cloud for even your largest analytic needs, using all the data you have on-premises.

Please visit wandisco.com, or visit the AWS Marketplace to find out more about the details of LiveData Migrator, and try it for yourself for up to 5TB of data at no cost.

APN Partner Spotlight

WANdisco is an advanced tier ISV Migration competency APN partner, available in the AWS marketplace with a proven data migration and analytics practice to AWS S3 and EMR.

About the author

Paul Scott-Murphy, VP Product Management, WANdisco

Paul has overall responsibility for WANdisco’s product strategy, including the delivery of product to market and its success. This includes directing the product management team, product strategy, requirements definitions, feature management and prioritisation, roadmaps, coordination of product releases with customer and partner requirements, and testing. He was previously Regional Chief Technology Officer for TIBCO Software in Asia Pacific and Japan. Paul has a Bachelor of Science with first class honours and a Bachelor of Engineering with first class honours from the University of Western Australia.
